The Keynote

My first GTC was not my first keynote. Being a gamer, I’ve seen Jensen get on stage and talk about DLSS, the new GPUs, etc., so seeing the man in the leather jacket was nothing new. However, each year there is fresh excitement about what he will speak on. This year, supply chain issues, the rampant expansion of Gigafactories (including multiple in my state of Texas), and the adoption of AI led me to think this could be the next stage for GTC, if Jensen did it right.

Within the first 5-10 minutes I realized this wouldn’t be the case. Jensen got up and started talking about the different changes and growth at the company, and man, did I lose interest immediately. Everyone likes a victory dance when it’s your team, but when you’re not invested, it’s just playing to the crowd, and I have to admit that’s how it felt. To be fair, this isn’t the first time we’ve seen this. AMD’s CES 26 keynote was almost exactly this: it focused solely on hyperscaler AI solutions, and none of it was really for the consumer (at the CONSUMER Electronics Show). I was annoyed at the whole thing, but since it has happened before I let it slide, hoping to hear the next thing. To be fair, the keynote covered really cool stuff: incredible technology and some of the most amazing feats an enterprise could want. However, none of the tech was actually aimed at enterprises; again, it was all dedicated to full datacenter creation on DGX with NVIDIA’s technology. While most enterprises are still struggling to understand what they can do to start their AI journey, Jensen instead dedicated the majority of the keynote to advertising his solution to the 10-20 public clouds and neoclouds out there.

Another part of the keynote where I got lost was “Tokenomics.” Yes, tokens are really the lifeblood of AI. The problem I have with tokens as a measure of cost is that a token has no measurable cost outside of power. To me, and I could definitely be off base on this, cost-per-token is a measurement that NVIDIA uses to prove that their GPUs and full solutions are the best for running AI. Great! We already knew that… Again, I chalk this up to hyperscaler pandering.

NemoClaw brought me back though. As a big OpenClaw cheerleader, seeing NemoClaw was a great moment. To me, NemoClaw is the “OpenShift” moment for AI. If you think back to when Kubernetes was being accepted by enterprises, they started finding a lot of security issues. True, those security issues were nowhere near as bad as OpenClaw’s. Kubernetes had openings that were dangerous, whereas OpenClaw feels like you’re giving the keys to the kingdom to a hyperactive teenager who doesn’t know you well enough to know what NOT to do. NemoClaw flips OpenClaw the same way OpenShift flipped Kubernetes. This means that instead of starting with full rights, you start with no rights and open up capabilities as you go, rather than having everything open by default at the start. If OpenClaw/NemoClaw truly becomes the OS of AI, then that opens MANY doors for the world to start playing with AI, building agents, and using them in daily tasks. I’ll add one note here: “What if CPUs could run your OpenClaw tasks locally on a laptop instead of a GPU? That’s what AMD and Intel want, and it could be an inflection point into a new phase of AI adoption.”
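To make the “start with no rights” idea concrete, here is a minimal Python sketch of the two defaults. This is not NemoClaw’s or OpenClaw’s actual API, which I haven’t dug into; `AgentPolicy`, its methods, and the capability names are all hypothetical, made up just to show the difference between open-by-default and closed-by-default.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class AgentPolicy:
    """Hypothetical agent permission policy (illustration only, not a real API)."""

    default_allow: bool  # True = everything open at the start, False = nothing open
    allowed: set[str] = field(default_factory=set)
    denied: set[str] = field(default_factory=set)

    def grant(self, capability: str) -> None:
        # Explicitly open one capability.
        self.denied.discard(capability)
        self.allowed.add(capability)

    def revoke(self, capability: str) -> None:
        # Explicitly close one capability.
        self.allowed.discard(capability)
        self.denied.add(capability)

    def can(self, capability: str) -> bool:
        # Explicit decisions win; anything unlisted falls back to the default.
        if capability in self.denied:
            return False
        if capability in self.allowed:
            return True
        return self.default_allow


# Open by default: the agent can do anything nobody thought to block.
open_by_default = AgentPolicy(default_allow=True)

# Closed by default: the agent can do only what was explicitly granted.
locked_down = AgentPolicy(default_allow=False)
locked_down.grant("read_calendar")  # hypothetical capability name

print(open_by_default.can("delete_files"))
print(locked_down.can("delete_files"))
print(locked_down.can("read_calendar"))
```

The only real difference between the two policies is the fallback in `can`: with the closed default, forgetting to list a capability fails safe instead of failing open.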

The Expo

Walking the expo felt surreal, as there was an easy-to-spot gap between the everyday use of AI solutions from hyperscalers, dedicated AI use, and specific AI software. Basically, what I mean is that there was a huge “Start here” gap. There were tons of robots walking around, tons of digital people, and computer vision all over the place, but those are the regulated, well-understood solutions that are fun to see. GTC is for the squirrels of IT: they want the next shiny thing, and they chase it. Most enterprises are not like this. They are change resistant and want to ensure that what they do has real ROI for their environment. I found it interesting that the expo didn’t really meet customers where they are, but rather was “LOOK AT THE COOL STUFF,” which was cool, FOR SURE, but didn’t really move the needle on AI adoption.

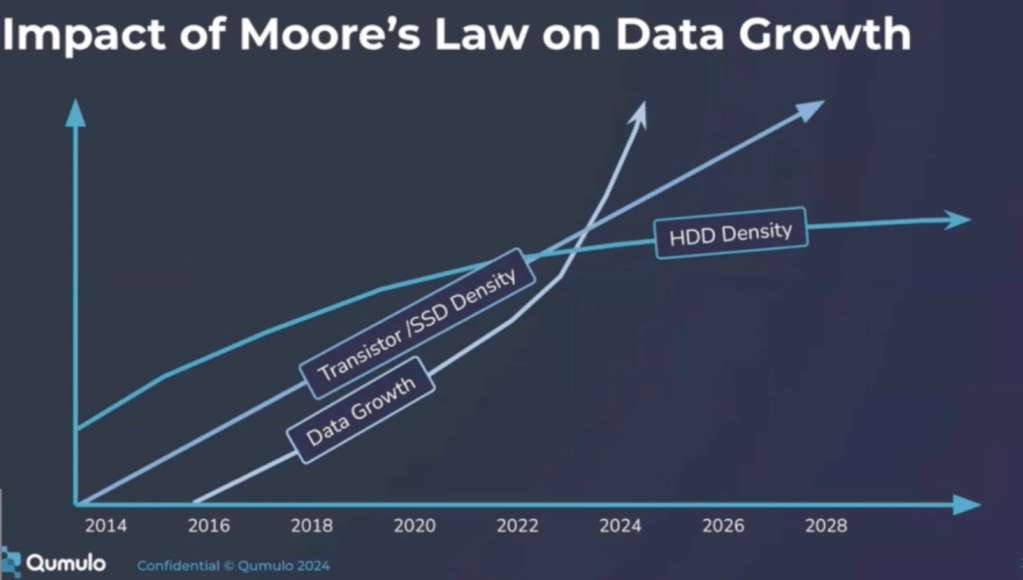

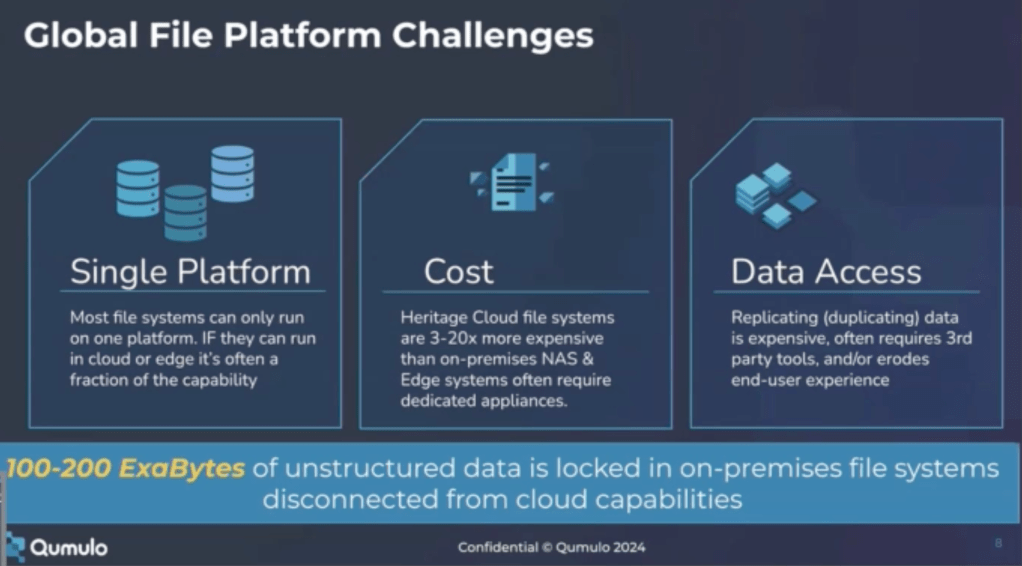

On the expo floor, I spoke with a number of vendors whose solutions answer problems customers don’t know they will have and, more interestingly, came across problems that don’t really have answers yet. For instance, context window storage was one issue I discussed at length: getting a customer into the environment and using AI is a great place to be, but over the long term of using the solution, that storage and its management need to be addressed through software integration and hardware. At a time of supply chain constraints, this is another pain point, and one I’m sure would have been great to attack.

It was truly striking that the supply chain was not mentioned once. Not on the stage, not on the expo floor, nowhere that I was (though I was doing booth duty for quite a bit of the show). To me, this highlighted just how many partners, and people who want to be partners, were at the show. I went to a session and overheard a conversation between two people trying to fix an issue for K-12 AI adoption. I had to inject myself into the conversation, as their solution was truly interesting in how it worked, but then I found out that neither of them was really a customer; they had built an app and were getting it into people’s hands.

AI truly has brought a season of people building solutions with Claude Code or other tools. Those solutions are built by individuals, not ISVs, and they are being advertised wherever they can be.

Side-note on Travel/Passes

Expo pass holders made up the majority of people at the conference. With three theatres (maybe two) and all the expo areas, including the main floor, the side room with the startups, and the outside pavilion, they were able to meet all the people they could want and listen to sessions in the expo (some of which were pretty interesting, and better than the side room content). If you’re going to GTC, it’s probably something to think about. Also, book your hotel now. It’s always good to remember that you can book a hotel that will hold your reservation without charging you, so you can still cancel if you can’t make it next year, normally up to 24 hours beforehand. Always good to keep in mind with conferences.

Conclusion

Though I spent the majority of my time outside in a booth, I really did enjoy my time there. You can feel the excitement in the air from the caffeinated folks looking forward to the next new thing. GTC really needs to move to Vegas, as there were just SO many people there (this claustrophobic kid had issues multiple times), but it won’t be moving for the next couple of years, as the agreement with NVIDIA is to stay in San Jose. The discussions I had with folks were so interesting, and the students learning and working to find new areas to develop and grow in were amazing to me. Even though there was a heat surge outside, GTC staff were always passing out water and keeping folks hydrated. I hope to make it back out there next year, and hopefully spend more time with the people and the tech than I was able to this time. We shall see.